AI Product Strategy

Before You Build AI Into Your Product — Data Readiness, Build vs. Buy, and the Cost Nobody Budgets For

April 2026 / 14 min read

Posted by Tarek Fawaz

Series — Part 3 of 3

This is the third post in a series on AI adoption and engineering leadership. Part 1: Agentic Spec-Driven Development. Part 2: Building With AI vs. Building For AI.

The Most Expensive AI Feature Is the One You Shouldn't Have Built

In my previous post on Building With AI vs. Building For AI, I deliberately kept the "Building For AI" path thin. The reason was simple: most organizations aren't ready for it yet. They should start with Building With AI — empowering their teams — before they attempt to ship AI capabilities to customers.

But for those who are ready, or who think they are, this post is the deeper playbook. It covers the three frameworks I suggest when evaluating whether an AI feature belongs in a product, and how to build it without burning money, trust, or engineering capacity.

The three questions every AI product decision must answer:

- Is our data ready?: The foundation determines everything that follows.

- Should we build or buy?: The wrong choice is expensive in both directions.

- What does this actually cost?: Not just the compute — everything.

Getting any of these wrong is expensive. Getting all three wrong is how you end up twelve months later with a feature nobody uses, a data pipeline nobody trusts, and an invoice nobody budgeted for.

Framework 1: Data Readiness Assessment

As DJ Patil, former U.S. Chief Data Scientist, once put it: "Data is the new oil? No. Data is the new soil." You don't just extract value from it — you cultivate it. And if the soil is poisoned, nothing you grow will be safe to consume.

Before you evaluate a single model, vendor, or architecture, ask whether your data can support what you're planning to build. This isn't a theoretical exercise — it's a structured assessment with pass/fail criteria.

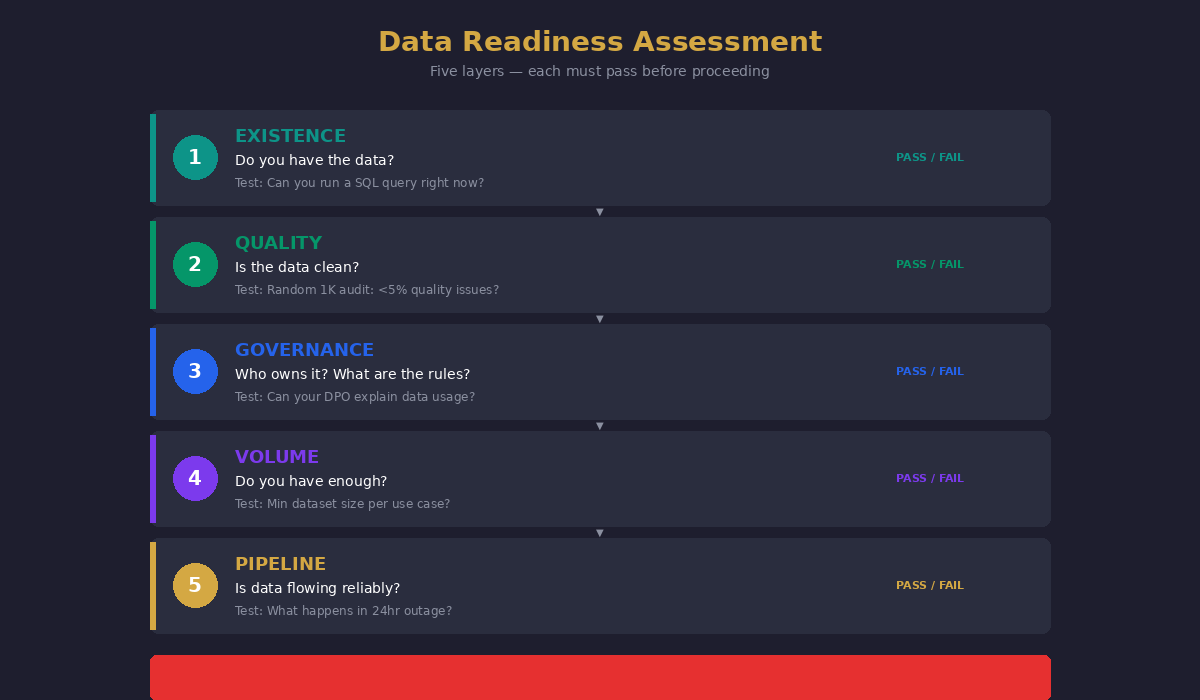

The Five-Layer Data Readiness Model

Layer 1 — Existence: Do you have the data?

This sounds obvious, but I've seen organizations plan AI features around data they assumed existed but didn't. You need to physically verify that the training data, the inference data, and the feedback loop data all exist in a queryable, accessible form. Not "we have it somewhere in a legacy system." Not "the vendor said they'd provide it." Actually there, actually accessible.

The test

Can you run a SQL query right now and get a representative sample? If no, you're not ready.

Layer 2 — Quality: Is the data clean?

Garbage in, hallucinations out. But "clean" means different things for different AI use cases. For a recommendation engine, missing values in non-critical fields might be acceptable. For a medical decision support system, a 2% error rate in lab values could be dangerous.

Define your quality thresholds per use case, not globally. Measure completeness (what percentage of records have all required fields?), accuracy (how do you validate that values are correct?), freshness (how old is the data, and does staleness matter?), and consistency (do the same entities have the same identifiers across systems?).

The test

Take a random 1,000-record sample and manually audit it. If more than 5% of records have quality issues that would affect model output, you need a data cleaning phase before you need a model.

Layer 3 — Governance: Who owns it and what are the rules?

Data governance isn't a compliance checkbox — it's the foundation that determines whether your AI feature survives contact with reality. You need clear answers to: who owns each data source? Who can approve its use for model training? What are the privacy implications (GDPR, CCPA, industry-specific regulations)? What happens when a customer requests deletion — can you remove their data from a trained model? What's your bias audit process?

If your governance is immature, every AI feature you build carries regulatory risk that scales with adoption. The more customers use it, the more exposed you are.

The test

Can your data protection officer explain exactly what data your AI feature uses, where it comes from, and what happens if a customer exercises their right to deletion? If the answer involves the phrase "we'd need to check," you're not ready.

Layer 4 — Volume: Do you have enough?

Small datasets produce confident but wrong answers. The minimum viable dataset depends entirely on your use case. A classification model might work with 10,000 labeled examples. A generative model fine-tuned for your domain might need millions of tokens of high-quality text. A recommendation engine might need years of behavioral data.

The test

Consult with a data scientist (not a vendor) on the minimum dataset size for your specific use case. If you're below that threshold, either invest in data collection first or use a pre-trained model and evaluate whether its general knowledge is sufficient without fine-tuning.

Layer 5 — Pipeline: Is the data flowing reliably?

A model is only as good as the data feeding it in production. If your data pipeline breaks, your AI feature breaks — but unlike a broken API that returns an error, a broken AI pipeline might return confidently wrong answers. That's worse.

Assess: is your data pipeline monitored? Do you have alerts for data drift, schema changes, and delivery failures? What's your recovery time when the pipeline breaks? Is there a fallback experience when AI can't produce a reliable result?

The test

Simulate a 24-hour data pipeline outage. What happens to your AI feature? If the answer is "it keeps serving stale predictions without anyone noticing," you have a critical gap.

The Readiness Verdict

Score each layer: Ready / Partially Ready / Not Ready. If any layer scores "Not Ready," that's your first investment — not the model, not the vendor, not the GPU cluster. The data foundation comes first.

AI is a rocket, but data is the fuel. Without fuel, you have a useless rocket. With the wrong fuel, you crash.

Framework 2: Build vs. Buy Decision Tree

Andrew Ng, founder of DeepLearning.AI, has emphasized: "Don't start with the solution. Start with the problem, then ask whether AI is the right tool." The same logic applies to build vs. buy — the question isn't whether you can build it, but whether you should.

Once your data is ready, the next question is whether to build your own AI capability or buy it. This decision is more nuanced than most organizations realize — and the wrong choice is expensive in both directions.

The Decision Axes

The build-vs-buy decision isn't binary. It's a spectrum across four axes:

Axis 1 — Differentiation Value. Does this AI capability create competitive advantage that's unique to your business? If the AI feature is core to your product differentiation — the thing customers choose you for over competitors — building gives you control, customization, and IP ownership. If the AI feature is supporting infrastructure (chatbot for customer service, document classification, code review) — buying is almost always better. The test question: if a competitor launches the same AI feature tomorrow using the same vendor you're considering, does that eliminate your competitive advantage? If yes, build. If no, buy.

Axis 2 — Data Sensitivity. Where does your data live, and where is it allowed to go? If your data is regulated (healthcare, finance, government), sending it to a third-party API introduces compliance complexity. If your data is your competitive moat, sharing it with a vendor who trains on customer data is a strategic risk. If your data is general-purpose and non-sensitive, buying from a vendor with a strong API is the pragmatic choice.

Axis 3 — Maintenance Commitment. Building means maintaining. Models drift. Data distributions change. New edge cases appear. Evaluation pipelines need updating. Prompt engineering needs iteration. Do you have the team to sustain this for years, not months? Many organizations underestimate this. Building an AI feature is a 3-month project. Maintaining an AI feature is a multi-year commitment. If you can't staff the maintenance, buy — even if building would be technically superior.

Axis 4 — Time to Value. How urgently does the business need this capability? If the window is 2-3 months, buy a solution and customize it. Building from scratch takes 6-12 months minimum for anything production-grade. If the window is 6-12 months and the differentiation value is high, build — but with a clear phase plan and milestone gates.

The Decision Matrix

Map your use case against these four axes:

Build

High differentiation + sensitive data + team capacity + longer timeline.

Buy

Low differentiation + general data + limited team + urgent timeline.

Hybrid — Build core, buy periphery

High differentiation + sensitive data + limited team.

Buy + Private deployment

Low differentiation + sensitive data → buy with on-premise or private deployment.

The middle cases are the hardest. When differentiation is moderate and data sensitivity is mixed, the answer is often: buy the foundation, build the differentiation layer on top. Use a pre-trained model via API for the heavy lifting, then fine-tune or add a custom orchestration layer for the part that makes your product unique.

The Hybrid Pattern

In practice, most mature organizations end up in a hybrid model. They buy compute and base models (OpenAI, Anthropic, Ollama for on-prem), they build the orchestration, context engineering, and domain-specific fine-tuning, and they buy supporting tooling (evaluation, monitoring, vector databases). The build-vs-buy question isn't answered once — it's answered per component.

Framework 3: True Cost Modeling

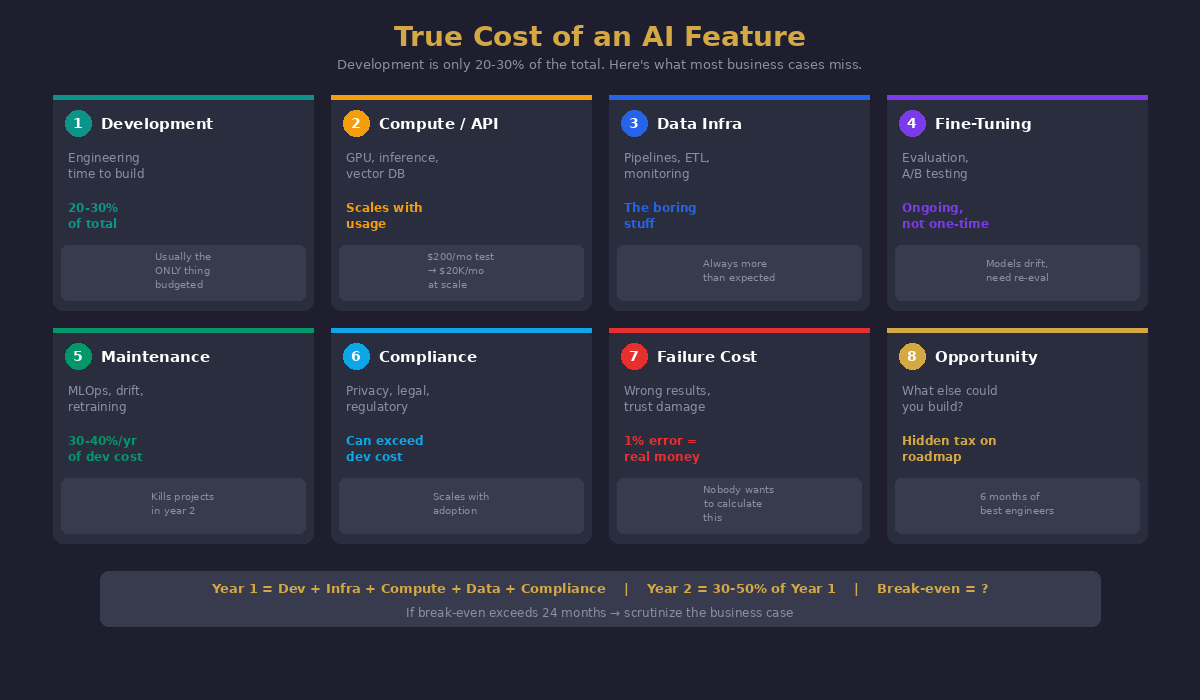

Martin Casado, partner at Andreessen Horowitz, wrote in his analysis of AI application economics: "AI applications look like software businesses but have the gross margins of services businesses." The infrastructure cost compounds in ways traditional software doesn't. If you budget like a SaaS build, you'll run out of runway like a consulting firm.

This is where most AI business cases fall apart. The cost model presented to leadership typically includes compute costs and development time. The actual cost includes at least eight categories, and the ones that get missed are usually the ones that kill the project.

The Eight Cost Categories

- 1. Development Cost: The engineering time to build the feature. This is what everyone budgets. It's usually 20-30% of the total cost.

- 2. Compute / API Cost: GPU instances for training, API calls for inference, vector database hosting. This scales with usage — every customer interaction has a marginal cost. Model this as a per-request cost, not a monthly fixed cost. A feature that costs $200/month in testing can cost $20,000/month at scale.

- 3. Data Infrastructure Cost: Data pipelines, ETL jobs, storage, data validation tooling, monitoring. This is the "boring" infrastructure that nobody budgets for but everything depends on. Include the cost of cleaning your existing data — it's almost always more than expected.

- 4. Fine-Tuning & Evaluation Cost: Model evaluation pipelines, A/B testing infrastructure, human evaluation for quality scoring, red-teaming for safety. This is ongoing, not one-time. Models need re-evaluation as data distributions shift.

- 5. Maintenance & Model Operations Cost: Model drift monitoring, retraining pipelines, prompt version management, rollback capabilities. Budget 30-40% of initial development cost per year for ongoing maintenance. This is the cost that kills projects in year two.

- 6. Compliance & Legal Cost: Data privacy assessments, regulatory compliance reviews, terms of service updates, liability insurance considerations. For regulated industries, this can exceed development cost.

- 7. Failure Cost: What happens when the AI feature produces incorrect results? Customer trust damage, support ticket volume, potential liability. Model the cost of a 1% error rate, a 5% error rate, and a catastrophic failure. This is the cost nobody wants to calculate but the one that matters most for the go/no-go decision.

- 8. Opportunity Cost: What else could your engineering team build with the same time and budget? If the AI feature requires six months of your best engineers, what are you not building during that period? This is the hidden tax on AI projects that competes for roadmap attention.

The Cost Model Template

For any AI feature proposal, require these three numbers before approving:

- Year 1 Total Cost: Development + infrastructure + compute + data prep + compliance. This is the "build it" cost.

- Year 2 Ongoing Cost: Maintenance + compute at projected scale + evaluation + retraining. This is the "keep it alive" cost. It should be 30-50% of Year 1.

- Break-Even Point: At what usage level does the revenue or cost savings from the AI feature exceed the cumulative cost? If the break-even is beyond 24 months, scrutinize the business case carefully.

The Integration: How These Three Frameworks Work Together

The sequence matters:

- Data Readiness first: If your data isn't ready, nothing else matters. Stop here and invest in data foundations.

- Build vs. Buy second: Once you know your data is ready, decide the right delivery mechanism.

- Cost Modeling third: Once you know what you're building (or buying), calculate the true total cost.

Each framework can produce a "stop" signal. Data not ready? Stop. No differentiation value? Buy, don't build. True cost exceeds projected value? Don't do it at all.

The discipline to stop is more valuable than the capability to start. The most expensive AI feature is the one you built without asking these questions first.

A Note on Trends

As Cassie Kozyrkov, former Chief Decision Scientist at Google, has noted: "The biggest risk in AI isn't the technology failing. It's the organization not knowing what question they're trying to answer."

Every quarter, a new AI capability becomes the industry's shiny object. Retrieval-Augmented Generation. Agentic workflows. Multimodal AI. Each one is genuinely powerful. And each one is also a potential trend trap if adopted without answering these three questions first.

The pattern I've seen repeatedly: leadership sees a competitor announce an AI feature, panics, and fast-tracks a similar initiative without data readiness assessment, build-vs-buy analysis, or true cost modeling. Six months later, the feature ships. Twelve months later, it's quietly deprecated because the maintenance cost exceeded the value, the data quality produced unreliable results, or the vendor they chose raised prices 300%.

Don't be that organization. Chase value, not trends. And use these frameworks to tell the difference.

At TM-Tech Alliance, we help organizations evaluate AI features with the rigor they deserve — from data readiness assessments and build-vs-buy analysis to true cost modeling and production rollout. If you're sizing up an AI initiative, let's talk.

Share this post

Instagram doesn't support direct web sharing — we copy a ready-to-paste caption to your clipboard.